Following the earlier article on what you get when you buy a Medtrum A6 CGM, let me preface this piece by mentioning that it contains only a day of data. It covers the second 24 hours of use of both a Dexcom G5 sensor and the Medtrum A6, and the readings were taken over a roughly twelve hour period in which my poor fingertips suffered multiple tests to see how the Dexcom G5 via xDrip+ and the Medtrum A6 compared against one another and against blood testing. It is also an n=1 experiment, so any conclusions drawn should be taken with this in mind.

Introduction

For blood testing (and calibration) I used a Contour Next One device, which the Diabetes Technology Society recently rated as the most precise and accurate on the market. Given the statements in papers such as this that the accuracy of the calibration instrument is incredibly important in getting good CGM results, I wanted to give both Medtrum and Dexcom the best possible chance.

Also, to be clear here, I am using both sensors “off label”. I have tried numerous CGM sensors on my midriff and found them to lose accuracy and fail well inside the manufacturer stated life. As a result, they are now always attached to my upper arms, similarly to the Libre. In general, I have found this provides better accuracy and longer life. As always, your experiences may vary.

Going into this, the stated MARD for both systems is 9%. Now whether this is Median or Mean, I can’t tell, but based on the single day’s data, I’ve calculated an equivalent for both. Why do I say “An Equivalent”? I neither have access to, nor own, a Yellow Springs Instruments Venous Plasma testing device, so instead of the term MARD, I’ve used “Variance from Blood” or “VfB” for short. This is because, although the numbers generated this way use the same calculation, they don’t use the same reference data, so they are likely to vary from true MARD.

The technique used is very simple. I took a blood test (SMBG) every 20-30 minutes throughout the day, noted the preceding sensor reading from each system, and recorded it all in an Excel sheet. All data was captured in the euglycaemic range, between 4mmol/l and 8mmol/l.
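As a rough sketch of the pairing step described above, the snippet below picks the latest sensor reading at or before each blood test. The function name, the timestamps and the glucose values are all made up for illustration, and treating "preceding" as "at or before the SMBG time" is my assumption; the real data was simply recorded by hand in a spreadsheet.

```python
from bisect import bisect_right

def preceding_reading(sensor_times, sensor_values, smbg_time):
    """Return the latest sensor value at or before smbg_time, or None if there is none."""
    # sensor_times must be sorted ascending for bisect to work.
    i = bisect_right(sensor_times, smbg_time)
    return sensor_values[i - 1] if i > 0 else None

# Hypothetical example: times in minutes since midnight, glucose in mmol/l.
sensor_times = [600, 605, 610, 615, 620]
sensor_values = [6.1, 6.0, 5.8, 5.7, 5.5]
smbg = [(612, 5.4), (620, 5.3)]  # (time, finger-prick value)

# Build (SMBG value, paired sensor value) tuples.
pairs = [(bg, preceding_reading(sensor_times, sensor_values, t)) for t, bg in smbg]
print(pairs)  # [(5.4, 5.8), (5.3, 5.5)]
```

The same pairing would be run once per sensor system, giving two lists of (blood, sensor) pairs against the same SMBG values.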

So then, into the data, and first, how the systems performed against each other…

Results

Sensor Data versus SMBG

As can be seen from the 12 hours of data in the following graph, there is quite a significant amount of variability:

The first graph simply shows blood tests versus sensor data. What we can see is that the Dexcom tracked the SMBG readings much more closely in this 12 hour period. Initially, in the early part of the run, the Medtrum seemed to be following SMBG well, but as soon as we saw an inflection in the graph and glucose levels started to drop, the lag in the Medtrum readings appeared to increase and its accuracy fell.

Throughout the middle of the test period, there is a noticeable lack of reversion back to the blood testing values which isn’t as evident in the Dexcom/xDrip numbers, which seem to track better. It’s only post lunch, once the glucose values return to the high sixes to eight that Medtrum seems to align once more.

Given the variance of values shown in the first graph, I calculated the VfB for each SMBG data point. The Dexcom/xDrip+ line in orange maintains a value throughout the day that for the most part falls below 10%. On the other hand, the Medtrum A6 varies dramatically as glucose levels fall, rise and then fall again. Blood glucose levels are shown in grey against the right hand axis for reference.

For both sensors, the Median and Mean values are shown below:

| | Medtrum A6 | Dexcom G5/xDrip+ |
|---|---|---|
| Mean VfB | 12.4% | 5.7% |
| Median VfB | 10.7% | 4.8% |
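For anyone wanting to reproduce this kind of figure, here is a minimal sketch of the calculation, assuming VfB per data point is the absolute difference between the sensor and SMBG readings divided by the SMBG reading (the usual absolute relative difference behind MARD). The function name and the pairs below are hypothetical and are not the data from this test.

```python
from statistics import mean, median

def vfb_percent(sensor, blood):
    """Absolute relative difference of a sensor reading from its SMBG value, in percent."""
    return abs(sensor - blood) / blood * 100.0

# Hypothetical (SMBG, sensor) pairs in mmol/l, for illustration only.
pairs = [(5.0, 5.5), (6.0, 5.7), (8.0, 8.4), (4.0, 4.6)]

# One VfB value per blood test, then summarised two ways.
per_point = [vfb_percent(sensor, blood) for blood, sensor in pairs]
mean_vfb = mean(per_point)
median_vfb = median(per_point)
print(f"mean {mean_vfb:.2f}%, median {median_vfb:.2f}%")
```

With these made-up pairs the mean comes out at about 8.75% and the median at about 7.5%, which shows how a couple of large misses pull the mean above the median.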

Analysis

For further analysis, I’ve used the Surveillance Error Grid, as defined here. It provides a similar view to the Clarke Error and Consensus Error grids, indicating changes in risk as readings move away from the central line. I’m using it here in its descriptive form given the smallish number of data points.

Dexcom G5/xDrip+ versus SMBG

The Dexcom data shows a reasonably tight spread compared to the reference BG value, indicating somewhere between none and slight risk of hyper- or hypoglycaemia. In line with the VfB numbers that were generated from the data points, this doesn’t come as a huge surprise and suggests you should see few unexpected highs or lows in the euglycaemic range when using a Dexcom G5 with xDrip+.

Medtrum A6 versus SMBG

Compared to the Dexcom data in the first grid, there’s clearly a much wider dispersion on the Medtrum. The majority of data points fall into a broader “slight” risk category and suggest that the sweet spot for Medtrum is an SMBG reading in the 120mg/dl-140mg/dl (6.7mmol/l-7.8mmol/l) zone. Having said that, most of the points still appear to fall into the green zone. To give some idea of the impact of this dispersion, I’ve looked at a few other error graphs.
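Since the error grid works in mg/dl while the rest of this piece uses mmol/l, a small conversion sketch may help. It assumes the usual factor of roughly 18.016 mg/dl per mmol/l (from glucose's molar mass of about 180.16 g/mol); the function names are mine.

```python
# Approximate conversion factor: glucose molar mass (~180.16 g/mol) divided by 10.
MG_DL_PER_MMOL_L = 18.016

def mgdl_to_mmol(mg_dl):
    """Convert a glucose value from mg/dl to mmol/l."""
    return mg_dl / MG_DL_PER_MMOL_L

def mmol_to_mgdl(mmol_l):
    """Convert a glucose value from mmol/l to mg/dl."""
    return mmol_l * MG_DL_PER_MMOL_L

print(round(mgdl_to_mmol(120), 1), round(mgdl_to_mmol(140), 1))  # 6.7 7.8
```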

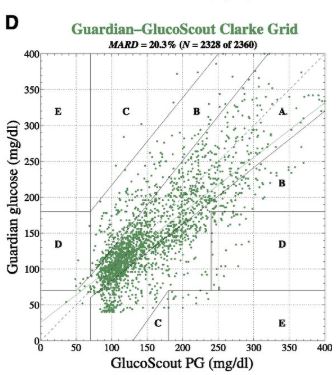

If we take readily available data for the Freestyle Libre from 2016:

And Medtronic Guardian from 2013:

You get the impression that the data from the Medtrum A6 falls somewhere between the two, but more importantly, that much more data is required to make a good comparison.

What’s also required is some testing in both Hypoglycaemic and Hyperglycaemic states. My 30-odd data points really don’t give too much away, other than that the period in which I tested gave some unexpectedly wide results for a normal glucose range.

MARD

As already mentioned, my equivalent of MARD, VfB, showed some dramatic differences between my two setups. As a reminder, the mean for the A6 is 12.4% and the median is 10.7%.

To give a wider view, these are the manufacturer stated MARD values from other systems, as mentioned in this article:

My VfB values seem to suggest similar performance to the old Medtronic Minilink based setups, or the Dexcom G4.

In answer to the question of whether I would loop off it: I’ve run a loop off a Minilink, and others run them off G4s without Share, but I’m not yet convinced that the idiosyncrasies in how the A6 follows the glucose trend make it safe to loop with. It seems to consistently report a higher value than the underlying glucose, and I don’t think that’s a good thing.

The other question that arises is, at the price, is it worth it?

It’s certainly a cheaper real-time CGM, but I’d like more data before drawing a conclusion on that.

Conclusions

The first and very clear conclusion is that 12 hours is not enough data to draw full conclusions. More data is needed, and can be acquired.

The second is that, with the above caveat in mind, the Medtrum A6 appears not to be as accurate/precise as the Dexcom G5 using xDrip+. Whether the use of xDrip+ with the A6 might improve this is an interesting question and one that should be considered for further research. There are, for example, many people looping using xDrip+ with the Libre and seeing much more accuracy with the xDrip algorithm and the ability to calibrate.

Finally, in relation to accuracy, given the variation I saw in this small sample, I’d be tempted to suggest that the A6 perhaps needs calibrating more often than once every 12 hours, similar to what you really need for the Medtronic Enlite with Guardian 2. That’s another data set to collect.

With regard to overall use and longevity, performance over time, etc, I’ll report back data as and when I have it. Once I get towards the seven days of usage, and then 14, it will be interesting to see whether any of this changes, or indeed, if the sensor lasts that long.

Initial impressions are that while it’s not as accurate as WeAreNotWaiting’s take on Dexcom’s offering, it doesn’t appear too far off what many people experience with Medtronic offerings, but at a fraction of the cost. So while we have some initial conclusions and data, there is a long way to go.

Appendix – CGM/SMBG comparison data

Many thanks for a great assessment given the short time you have had. The variance above does seem a concern but I do wonder how much this will change as insertion trauma dies down. It may well be that, like the Libre, the second week is more accurate than the first.

I am hoping to run it concurrently with the Libre and it may be interesting to try very frequent calibration with the Libre as the calibration method. Interesting also to try the effects of preinsertion.

Unfortunately I am going to be out of country so it may well be 6 weeks or so before I can run it but the possibilities seem good. I did get in touch with them but no real news about the pump as yet.

The A6 CGM is in stock in the U.K. with an expected delivery of 3 days, so I would expect a few will be trying it.

Some interesting points you raise there, Joe. The major difference between the Libre and the A6 is that the Libre doesn’t require calibration. People’s experiences vary with that, but it generally results in a consistent Variance from Blood. That’s why the Dexcom should be a better comparison.

As you are required to calibrate the sensor, I would expect the calibration algorithm to include a variance to account for that trauma, as both the Dexcom and xDrip+ algorithms do. Sadly, it doesn’t appear as though that’s the case. That’s also the reason for including the Dexcom/xDrip+ algorithm as a comparison.

Regarding pre-insertion, I’ve treated the Medtrum as I treat a Dexcom sensor. Both had a six hour pre-insertion, and I generally expect some form of noise as a result over the following six hours. Normally the Dexcom will bounce around for a couple of hours, need an additional calibration and then it gets going nicely. As it was, the Medtrum appeared to settle more quickly than the Dexcom, but it’s a question of what it settled to.

I’m sure plenty will try it. A cheaper option on the CGM front is always welcome. I’d like to see what we can do with xDrip+ with it though. I think that might improve outcomes.

Thanks for this analysis. For me it’s so darn good. I am very interested in trying the A6. I find Dexcom too expensive. Anything close enough is good enough for me. Is there somewhere I can see what happened in the next few weeks, and do you have any updates?

If you scroll to the bottom of the page, there are links to the two week and month analysis.